Stop Treating Every Bug the Same Way

The Realization

An update deadline was approaching and beta player reports were stacking up fast. Characters would suddenly start jittering, snapping to different positions mid-fight. It happened very rarely, but when it did it was catastrophic. We couldn't manage to reproduce it, yet videos were surfacing of players losing kills when it triggered at the perfectly wrong time. It was bad.

I was the fixer, the last line of defense to hitting our deadlines. Two other engineers had already spent days on it.

> Engineer A: "I'm not 100% sure but it HAS to be in one of these two functions. These are the only places we teleport the player like in the bug videos."

> Engineer B: "Honestly this is a wild one. I think it's a memory corruption or something nondeterministic. I doubt we'll find in time for the deadline."

I was confident going in. This was my role on the team, the last line of defense for bugs before a big release. But within hours, I had unknowingly stepped into the same trap they had. My approach was something like:

- Analyzed the two functions Engineer A had identified, looking for possible edge cases. Found nothing.

- Started a refactor to make those functions "more stable". Abandoned that when it felt unlikely to work.

- Hypothesized increasingly far-fetched theories with Engineer B.

- Finally tried to reproduce what I saw in the bug videos. It was rare so I started running the test on repeat.

Let's pause here, this was the WRONG approach. Yet it felt like disciplined debugging. In fact, parts of it were good:

- I got up to speed on relevant code.

- I spoke with domain experts.

- I read reports and tried to reproduce the issue.

And yet, none of it moved the bug forward. Why?

The problem wasn't effort or discipline. It was a deeper mistake: I was using the wrong best practices for this kind of bug.

The Bug Taxonomy

After stumbling through this bug, and many others like it, I realized something deceptively simple: a process that works really well for one type of bug can make no meaningful progress on another.

Over time, I came up with three categories around which bugs behaved and should be tackled differently:

- Easy bugs (Intuition bugs) Where intuition and local reasoning are usually sufficient.

- Medium bugs (Evidence bugs) Where intuition is unreliable, and progress requires following the program's real execution path.

- Hard bugs (Scale bugs) Where individual reasoning does not scale, and systematic or external leverage is required.

What's the difference?

Easy / Intuition bugs are the default, every day type. Their user impact hints at their locition and cause.

- Clear reproduction

- Localized failure

- Domain expert can often predict fix before running code

Medium / Evidence bugs are less frequent. Their source is often unclear. They hide in unexpected places and often only are revealed by concrete evidence in the execution state: logs, traces, or captured frames.

- Reproduction inconsistent

- Competing plausible explanations even from domain experts

- Logs contradict intuition

- Fix attempts change symptom but not eliminate it

Hard / Scale bugs are very rare; they may be encountered only a handful of times in a project. They often involve low level libraries or long-running production systems. These are the bugs that generate horror stories on Reddit. They’re usually solved through escalation: large-scale monitoring, expensive test infrastructure, or highly specialized expertise.

- No reliable developer reproduction

- Time-dependent

- Load-sensitive

Despite learning many debugging techniques and tools I'd never come across a taxonomy of when to change approaches. Experienced engineers handled bugs differently but rarely explained why, it was like a switch built into their brain but not communicated in words.

What I've found is that understanding of Medium / Evidence bugs is where the biggest opportunity is.

Engineers naturally improve at Easy / Intuition bugs. Hard / Scale bugs are few and far between. Learning to recognize Evidence bugs (and stop treating them like Intuition bugs) was the single most effective way I leveled up my debugging.

For the rest of this article, I'll cover how my process changes for an Evidence bug and why it's effective.

Evidence Bugs - The Process

The defining trait of a Medium / Evidence bug is this: you cannot reason your way to the answer without observing what the computer is actually doing. I found five repeatable practices that consistently steered me in the right direction.

Practice One: Define the Problem on Paper

Before I touch a debugger or open a file, I pull together the facts and my assumptions. This prevents me from jumping into debugging auto-pilot.

I force myself to write down my diagnosis.

- What I know is true

- What I think might be true

- What I am assuming without evidence

It may seem unnecessary at first, but writing forces clarity. Evidence bugs hide in vague language like this:

- “Sometimes the player jitters.”

- “It seems random.”

- “Probably related to networking.”

Writing forces precision:

- Under what conditions does it happen?

- What state must already be true for the bug to appear?

- What is the last observable correct behavior before failure?

Answers that can't be articulated become a checklist of where to start debugging and what knowledge gaps I need to fill. I can slow down to seek critical information from QA or other engineers and poke holes in my own assumptions.

Practice Two: Investigate in 30 Minute Cycles

After defining the problem, I aim to enter a productive, forward moving loop. Evidence bugs lure engineers into time consuming, unproductive activities like reproducing the same thing multiple times while deep in thought and chasing red herrings. Look away from the clock and 4 hours disappeared. Poof.

I find it immensely helpful to plan and execute in short intervals. I do this with the Pomodoro Technique using 5 minutes to plan/review instead of taking a break. However, even if you do something different the critical part is to self-evaluate and correct: every short interval aim to do something that produces a productive result.

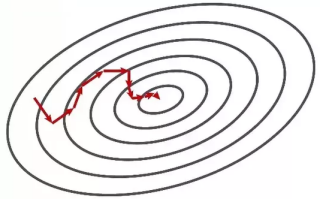

Frequently intervals course correct me towards the most promising direction. I think of it like a gradient descent where the root cause is at the low point.

Steering questions:

- What test will I run?

- What signal am I expecting?

- How will the result narrow the search space?

The goal is to rule out the largest section of possibility space with every test. If I can't explain how the next action moves me closer to the root cause, I don’t do it.

- “Logging input to function X will determine if corruption occurs before or after.”

After the 30 minutes is up I do a lightning review. Was it a success? Did I learn anything new in that time? Did I make forward progress? Then it's onto planning the next iteration.

Practice Three: Make Reproduction Cheap (15 Seconds or Less)

Iteration speed is everything for Evidence bugs. Reducing the possibility space is limited by how quickly I test and eliminate possibilities.

I optimize for:

- Deterministic reproduction

- A tight loop: run → observe → adjust

My rule of thumb is 15 seconds or less from change to validation (compile, play, reproduce), or as close as we can get with project limitations. This means I need the game to compile quickly, debug tooling to teleport to specific situations, and the ability to locally spin up multiplayer or backend services.

Debugging can often reveal that it's the tools making the bug hard over the bug itself, and that is worth being addressed.

Once reproduction is cheap I can dive through the possibility-space of the codebase.

Practice Four: Bisect the Search Space Ruthlessly

After fast reproduction, bug searches can often turn mechanical. The goal is to isolate the problem to understand it and not fall into false intuition traps.

Bisecting possibilities treats the code like a black box, making steady progress towards the root cause. I aim to reduce the code-space by half or more when a breakpoint is hit or when checking a theory on what data will be recorded in a stack trace or log.

I find it most helpful to write the theory + possible outcomes when looping to self-check that I'm really maximizing the value of each test. I think in terms of data transformations. Every wrong output came from a transformation of some input. My job is to work backward: where did the correct data first get overridden wrongly? What code can set this data?

It may seem unproductive at first to cut the codebase in half every 30 minutes, but even if completely clueless from start to finish you could narrow a codebase of 4 million lines down to 1 line in 2 days (4,000,000 lines ≈ 2^22, 22 iterations = 11 hours or ~1.4 eight hour work days). If you could upper-bound all root cause investigations to 2 days you'd be a world class fixer.

Of course in practice bisecting is rarely as clean as this theoretical value, but I find it to be a helpful anchor to guage my own efficiency.

Practice Five: Reflect

An incredible result of the above practices is they generate a chronological record of what actually happened from beginning to end, letting me debug myself and my process.

Once I've solved the bug I can ask myself what I could have done differently:

- Did I articulate the problem well enough at first?

- How often did my 30 minute iterations not make forward progress?

- What debugging or reproduction tools would have cut down time?

I find that most of the time I wasn't perfect. Sometimes it's very obvious what I could have done differently and I can incorporate that learning into similar bugs. This step improved my debugging skills most and allowed me to refine and simplify the other practices.

The Mindset Shift

My practices aren't revolutionary, they simply help me be more deliberate during the bug fixing process.

My mindset:

- Stop theorizing early, don't solve problems as I am diagnosing them

- Progress in short, deliberate increments

- Let evidence drive the investigation

- Deliberately iterating is faster than speculatively accelerating

In my original bug, none of my steps were wrong in isolation. They were simply out of order. I was reasoning before observing, refactoring before validating, and hypothesizing before seeing the data. Evidence bugs just don't work that way.

When This Process Works (and Doesn't)

These practices have proven their effectiveness to me while I refined them over the last 7 years. That said, they weren't ideal for every situation which is why I rely on the bug taxonomy.

For Intuition bugs, the practices of articulating initial assumptions, nailing down fast reproduction, and bisecting a search space are too slow. I don't recommend treating every bug as an Evidence bug. Instead I recommend getting better at identifying the signals to switch into this mode. Here's a few examples:

- 1-2 initial intuition ideas turn out wrong

- The list of "likely culprit" systems keeps getting appended

- Another engineer got stuck and escalated it

- Disagreement about reproduction steps

- Notice "spinning" - no forward progress after some time

- Difficulty in describing what's happening or when it starts

- Lack of domain expertise on the team

Thinking about taxonomy of a bug from the start guides me to the right tool for the job.

Modern Tooling and AI

I've found AI has great potential to augment this process.

The practice of slowing down, articulating assumptions, and validated iteration has direct parallels to the AI loops that are gaining popularity. Instead of single AI prompts for features, people are converging on multi-step processes like Plan -> Question -> Execute -> Validate, generating text artifacts in between. This is a good sign that AI can be useful beyond the easier Intuition bugs.

So far I've found:

- AI is very good at questioning the initial assumptions (Practice One).

- It can be used in an iteration step to test a thesis or of course sometimes get to the root cause immediately.

- It can help come up with ways to make reproduction cheaper (Practice Three) or identify future tool improvements when reflecting (Practice Five). It depends on how much context the AI has on existing tooling.

- AI gets stuck too. Keep in mind the goal of being efficient at debugging by always making forward progress. Unsuccessfully iterating on a prompt to help unstick an AI can be extremely unproductive. Unstick yourself instead.

- When an AI works it can be an excellent teaching tool. Review its successful reasoning to improve your own skill (Practice Five).

AI has not replaced or automated out the need to understand how our own programs fail. For harder Evidence bugs, I expect using a reliable and consistent process will become more important than ever for AI prompting.

Conclusion

Remember the jitter bug from the beginning? After my initial attempts stalled out, I took a step back and wrote down my understanding from scratch. I enlisted beta testers running a build with more verbose logging. I added cheats to test scenarios faster. Then I started eliminating systems that could impact player position, bisecting the search space with each short investigation.

It led me to somewhere none of us had considered (but should have!), our network serialization code. The root cause was an edge case that could set velocity to a garbage value, causing jitters or teleportation on the client depending on the player's connection.

The meta-skill of recognizing the class of bug and switching approaches is not talked about enough. Most programmers naturally level up their techniques, debug tooling, and bug fixing stamina in their first few years. However, they hit a wall which I'd argue is more related to a jump in process and not necessarily their years of experience. If you've hit a plateau at bug fixing, try it out.

Start by asking yourself, what kind of bug is this?